In science, the term "entropy" is generally interpreted in three distinct, but semi-related, ways, i.e. Carathéodory linked entropy with a mathematical definition of irreversibility, in terms of trajectories and integrability. Later, scientists such as Ludwig Boltzmann, Willard Gibbs, and James Clerk Maxwell gave entropy a statistical basis. This was in contrast to earlier views, based on the theories of Isaac Newton, that heat was an indestructible particle that had mass. that no change occurs in the working body, and gave this "change" a mathematical interpretation by questioning the nature of the inherent loss of usable heat when work is done, e.g., heat produced by friction. In the 1850s and 60s, German physicist Rudolf Clausius gravely objected to this latter supposition, i.e. Accordingly, Carnot reasoned that if the body of the working substance, such as a body of steam, is brought back to its original state (temperature and pressure) at the end of a complete engine cycle, that "no change occurs in the condition of the working body." This latter comment was amended in his foot notes, and it was this comment that led to the development of entropy. This was an early insight into the second law of thermodynamics.Ĭarnot based his views of heat partially on the early 18th century "Newtonian hypothesis" that both heat and light were types of indestructible forms of matter, which are attracted and repelled by other matter, and partially on recent 1789 views of Count Rumford who showed that heat could be created by friction as when cannons bored. Building on this work, in 1824 Lazare's son Sadi Carnot published Reflections on the Motive Power of Fire in which he set forth the view that in all heat-engines whenever " caloric", or what is now known as heat, falls through a temperature difference, that work or motive power can be produced from the actions of the "fall of caloric" between a hot and cold body. 
In other words, in any natural process there exists an inherent tendency towards the dissipation of useful energy. The short history of entropy begins with the work of mathematician Lazare Carnot who in his 1803 work Fundamental Principles of Equilibrium and Movement postulated that in any machine the accelerations and shocks of the moving parts all represent losses of moment of activity. Rudolf Clausius - originator of the concept of "entropy" S Although the concept of entropy was originally a thermodynamic construct, it has been adapted in other fields of study, including information theory, psychodynamics, thermoeconomics, and evolution. The statistical definition of entropy is generally thought to be the more fundamental definition, from which all other important properties of entropy follow. In terms of statistical mechanics, the entropy describes the number of the possible microscopic configurations of the system. that energy which cannot be used for external work, then entropy may be (most concretely) visualized as the "scrap" or "useless" energy whose energetic prevalence over the total energy of a system is directly proportional to the absolute temperature of the considered system, as is the case with the Gibbs free energy or Helmholtz free energy relations.

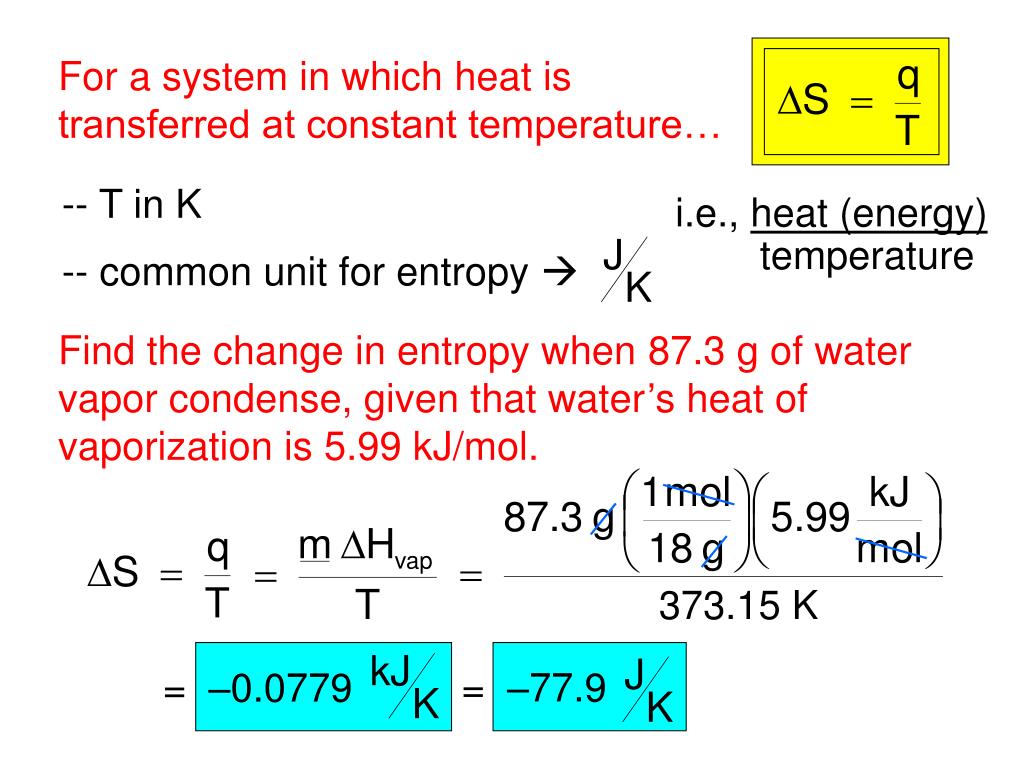

that used to push a piston), and its "useless energy", i.e. When a system's energy is defined as the sum of its "useful" energy, (e.g. Entropy is one of the factors that determines the free energy of the system. Quantitatively, entropy, symbolized by S, is defined by the differential quantity d S = δ Q / T, where δQ is the amount of heat absorbed in a reversible process in which the system goes from one state to another, and T is the absolute temperature. Entropy is an extensive state function that accounts for the effects of irreversibility in thermodynamic systems. In recent years, entropy has been interpreted in terms of the " dispersal" of energy.
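To make the definition dS = δQ/T and the free energy relation a little more concrete, here is a minimal Python sketch. It assumes the simplest case, heat absorbed reversibly at a constant temperature, so that the differential integrates to ΔS = Q/T, and it uses the Helmholtz relation F = U - TS. The function names and all numbers are hypothetical and chosen only for easy arithmetic.

```python
def entropy_change_isothermal(q_rev_joules: float, temperature_kelvin: float) -> float:
    """Integrate dS = dQ_rev / T at constant T, giving delta_S = Q_rev / T (in J/K)."""
    if temperature_kelvin <= 0:
        raise ValueError("absolute temperature must be positive")
    return q_rev_joules / temperature_kelvin


def helmholtz_free_energy(internal_energy_joules: float,
                          temperature_kelvin: float,
                          entropy_joules_per_kelvin: float) -> float:
    """Helmholtz free energy F = U - T*S; the T*S term is the part unavailable for work."""
    return internal_energy_joules - temperature_kelvin * entropy_joules_per_kelvin


if __name__ == "__main__":
    # Hypothetical values: 1500 J absorbed reversibly at 300 K.
    delta_s = entropy_change_isothermal(1500.0, 300.0)
    print(delta_s)  # 5.0 (J/K)

    # Hypothetical system with U = 10 000 J and S = 5 J/K at 300 K.
    print(helmholtz_free_energy(10_000.0, 300.0, 5.0))  # 8500.0 (J)
```

In this toy case the "useless" portion is T·S = 1500 J, which grows in direct proportion to the absolute temperature for a fixed entropy.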

The concept of entropy in thermodynamics is central to the second law of thermodynamics, which deals with physical processes and whether they occur spontaneously. Spontaneous changes occur with an increase in the total entropy of a system and its surroundings. In contrast, the first law of thermodynamics deals with the concept of energy, which is conserved. Entropy change has often been defined as a change to a more disordered state at a microscopic level.
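The link between spontaneity and an increase in entropy can be illustrated with a small Python sketch of heat flowing between two bodies. It assumes both bodies are large enough that their temperatures stay constant, so each entropy change is simply Q/T. The function names and numbers are hypothetical.

```python
def total_entropy_change(heat_joules: float,
                         t_source_kelvin: float,
                         t_sink_kelvin: float) -> float:
    """Total entropy change (J/K) when heat leaves a source and enters a sink.

    Both bodies are assumed to be reservoirs at fixed temperature.
    """
    if t_source_kelvin <= 0 or t_sink_kelvin <= 0:
        raise ValueError("absolute temperatures must be positive")
    ds_source = -heat_joules / t_source_kelvin  # the source loses heat
    ds_sink = heat_joules / t_sink_kelvin       # the sink gains heat
    return ds_source + ds_sink


def is_spontaneous(heat_joules: float,
                   t_source_kelvin: float,
                   t_sink_kelvin: float) -> bool:
    """A heat transfer is spontaneous only if the total entropy increases."""
    return total_entropy_change(heat_joules, t_source_kelvin, t_sink_kelvin) > 0


if __name__ == "__main__":
    # 1200 J flowing from a 400 K body to a 300 K body: -3.0 + 4.0 J/K.
    print(total_entropy_change(1200.0, 400.0, 300.0))  # 1.0
    print(is_spontaneous(1200.0, 400.0, 300.0))        # True
    # The reverse direction (cold to hot) would lower the total entropy.
    print(is_spontaneous(1200.0, 300.0, 400.0))        # False
```

Energy is conserved in both directions (the first law), but only the hot-to-cold direction raises the total entropy, which is why only that direction occurs on its own.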

Ice melting - classic example of entropy increasing described in 1862 by Rudolf Clausius as an increase in the disgregation of the molecules of the body of ice.
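As a rough numerical illustration of the melting-ice example, the entropy gained by the ice can be estimated from ΔS = Q/T, since melting takes place at a constant temperature. The sketch below assumes an approximate textbook latent heat of fusion for water of 334 kJ/kg and a melting point of 273.15 K. These values and the function name are assumptions for illustration, not figures taken from the text above.

```python
LATENT_HEAT_FUSION_WATER = 334_000.0  # J/kg, approximate textbook value (assumption)
MELTING_POINT_KELVIN = 273.15         # K, melting point of ice at atmospheric pressure


def entropy_of_melting(mass_kg: float) -> float:
    """Entropy gained (J/K) by `mass_kg` of ice melting at its melting point.

    Uses delta_S = Q / T with Q = mass * latent heat of fusion.
    """
    if mass_kg < 0:
        raise ValueError("mass must be non-negative")
    heat_absorbed = mass_kg * LATENT_HEAT_FUSION_WATER
    return heat_absorbed / MELTING_POINT_KELVIN


if __name__ == "__main__":
    # One kilogram of ice gains roughly 1.22 kJ/K of entropy on melting.
    print(round(entropy_of_melting(1.0), 1))
```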
